AI’s Capability Is Already Here — Leadership Work Is What Puts It to Work

There’s a number in Anthropic’s latest research every enterprise leader should be excited about: even in the work where AI is most ready to help, businesses today are tapping only a fraction of what’s possible. That gap is the opportunity of the next decade — and it’s leadership work, not technology work, that closes it.

The size of the gap tells leaders something specific. The hard part of putting AI to work isn’t building smarter models — capability is largely already here. The hard part is the structural work of an enterprise: how decisions move, where context lives, what’s governed and what’s open, and which people are equipped to direct the technology rather than chase it. That’s the work that turns capability into value. And it’s the work that defines the leaders who will come out of this decade ahead.

What this actually looks like

Picture two enterprises a year from now. Both have AI tools. Both have spent on capability, on infrastructure, on training. One is using AI almost everywhere — and it shows. Workflows that used to take days take hours. Decisions that used to need three meetings happen in one. Knowledge that used to live in the heads of senior people is suddenly available to the whole organisation. The leaders are not busier; they are more leveraged.

The other enterprise has the same tools and is barely moving. AI sits in chat windows on individual desks. Each team experiments in isolation. The hard organisational questions — who owns an AI’s decisions, where does context come from, how do you keep AI useful as the business changes — never get asked at the right altitude. The technology is in place; the structure to put it to work is not.

The difference between the two is not the model. It is not even the budget. It is the leadership work that decides how AI shows up in the operating model — what it is trusted with, where it sits in the flow of decisions, how the business stays coherent as the pace accelerates. That work does not get done by an AI vendor. It does not get done by an integrator. It gets done by leaders who treat AI adoption as a structural question, not a technical one.

That is the picture. And it is the picture the data is quietly pointing at.

What Anthropic actually measured

In March 2026, Anthropic’s economics team published one of the most careful pieces of measurement work yet on AI in the workplace. The paper, *Labor market impacts of AI: A new measure and early evidence* [1], introduced a metric called *observed exposure*: the share of an occupation’s tasks that AI is actually doing in real professional use, measured against what AI is theoretically capable of doing.

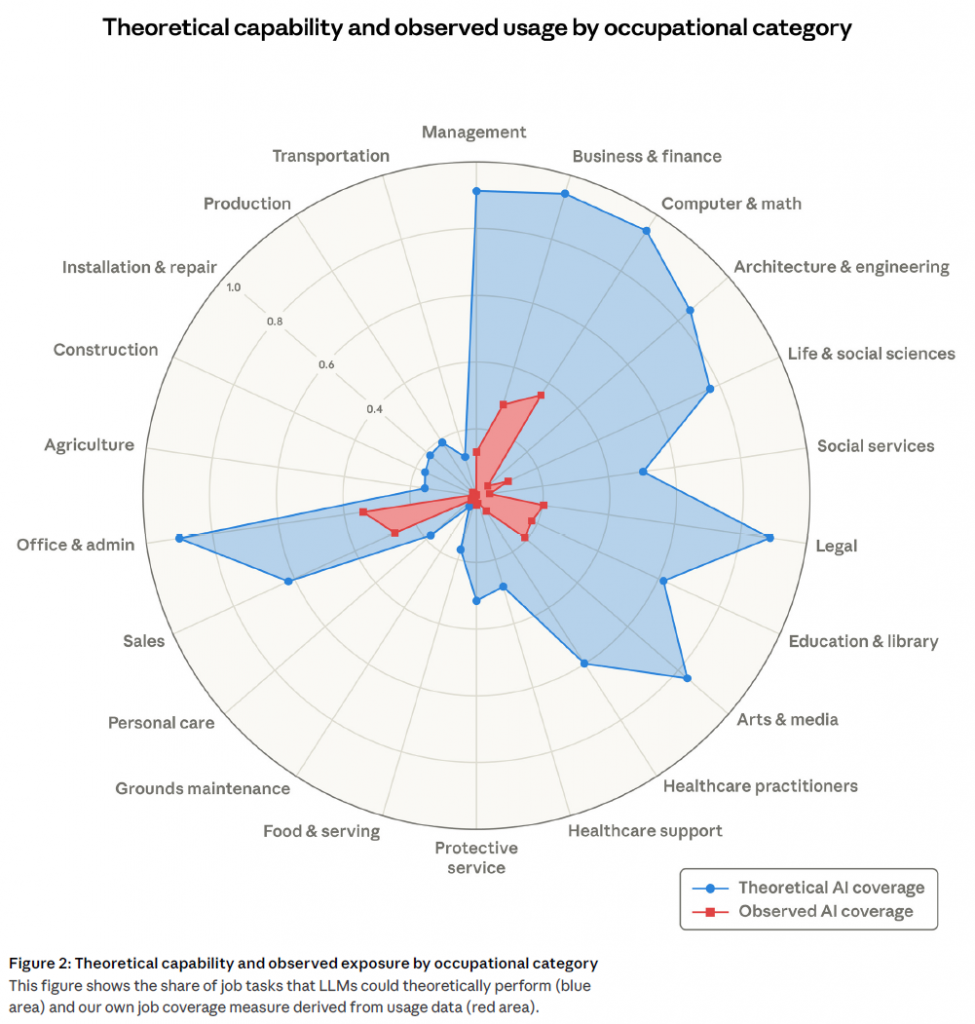

The headline finding: across nearly every white-collar occupational category, the gap between theoretical capability and observed use is enormous. In computer and math occupations, AI is theoretically feasible for 94% of tasks; observed coverage sits at 33%. Office and administrative roles show a similar shape. Even in the most-exposed individual occupations — computer programmers at 75%, customer service representatives at 70%, data entry at 67% — the work AI is actually doing is well below what AI could do.

97% of observed Claude usage already falls into tasks the underlying capability research had classified as feasible. The model is not the bottleneck.

Chart — Theoretical capability vs observed coverage by occupational category. Adapted from Anthropic, March 2026.

That is the gap. It is wide, it is measured, and it is the central fact every serious framing of this work agrees on. Anthropic’s own researchers describe the unused capacity as a function of integration constraints, verification requirements, and the work of fitting AI into existing professional workflows — not any shortfall of model ability. The gap exists because AI capability and enterprise readiness are not the same thing, and enterprise readiness is leadership work. Read this way, the data is not a warning. It is a map of the work that is actually ahead.

You can already see this in the market

The reading we have laid out is not ours alone. Across the analyst firms and business research outlets tracking AI’s enterprise shape, the same pattern is surfacing in different vocabularies.

Anthropic’s own broader research arc is part of it. The company’s Economic Index series [2], separate from the labour-market paper but adjacent to it, maps Claude usage as economic data, looking at adoption patterns by geography, enterprise size, and task type. The picture emerging is not *AI will do this work for you*. It is *AI is becoming an instrument organisations have to learn to play*, and most have not learned yet.

Harvard Business Review, in March 2026, named the same phenomenon the “last mile” problem: *“Few companies have been able to fundamentally change their operating and business models around AI.”* [3] Adoption is widespread; transformation is rare. McKinsey’s *State of AI* puts numbers on the same shape: 60% of organisations have not seen enterprise-wide EBIT impact from their AI programmes, and the gap between AI activity and AI impact, in McKinsey’s words, *“only seems to be widening.”* [4] Two-thirds of organisations have not even begun scaling AI across the enterprise.

BCG names the phenomenon directly in its report *The Widening AI Value Gap* [5]. Although the share of companies scaling AI roughly tripled, from 9% in 2024 to 28% in 2025, only a small minority of those investing heavily actually capture strong returns. Among the executives BCG surveyed, the most-cited blocker is competing priorities (90%), with unclear ROI cited by 76%. The mismatch is not a story of capability shortfall. It is a story of how enterprises are organising around the technology, or failing to.

Gartner’s forecasts on agentic AI land on the same diagnosis from the analyst angle. The firm predicts that 40% of enterprise applications will integrate task-specific AI agents by 2026, up from less than 5% in 2025 [6], and that more than 40% of agentic AI projects will be cancelled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls as the primary causes [7]. Gartner’s analysts increasingly frame the decisive variable as enterprise readiness (governance, foundational capabilities, operating-model maturity) rather than agent intelligence itself. The pattern is consistent: the projects that falter do so on operating-model grounds, not capability grounds.

This reading is gaining currency among institutional analysts.

But what does this look like on the ground?

Analyst signals and institutional research give one read of the AI moment. What practitioners actually report — from doing the work day-to-day, inside real teams, on real systems — gives another. Both matter, and they point in the same direction.

The 2025 Stack Overflow Developer Survey [8], the most widely cited annual reading of how working developers actually use technology, finds 84% of developers using or planning to use AI in their work. But beneath the headline, two-thirds name their biggest frustration as *“AI solutions that are almost right, but not quite.”* Only 29% say they trust AI tools today, down eleven percentage points from a year earlier. More than half of developers either don’t use AI agents at all or stick to simpler tools, even where more powerful options are technically available. The capability is in their hands; what is missing is the integration, verification, and trust scaffolding that turns capability into reliable output.

Practitioner reality inside IT service management tells a similar story. ITSM.tools’ 2026 State of AI in IT report [9] finds AI adoption near-universal: only 2% of IT teams report no AI use, and 67% describe their AI ROI as positive. But trust remains cautious. Just 16% grant AI full autonomous decision-making, with most teams maintaining a deliberate human-in-the-loop posture. The report’s framing is sharp: ITSM teams are *“deploying AI defensively rather than transformatively — approving use cases while maintaining human checkpoints.”* Capability is in the toolset; the work of trusting, governing, and integrating it is what sets the pace of deployment.

The same pattern shows up at the CEO level inside the AI infrastructure stack. Speaking to The Register in early 2026, Redis CEO Rowan Trollope said: *“I’ve seen fewer examples of real successful production agents than I would have imagined. It is still quite hard to do, and only the biggest companies in the world understand this is the future.”* [10] In the same piece, Gartner observed that enterprise AI agent rollouts have moved into the *“trough of disillusionment,”* the same pivot from *“that was a great idea”* to *“where’s my revenue?”* that any seasoned IT leader has watched play out several times in their career.

The friction is not in capability. It is in the integration, governance, and operating-model work that turns capability into reliable, valuable outcomes. That friction is exactly where structural attention pays off.

What this means for enterprise leaders

Step back, and the picture is clearer than the noise around AI suggests.

The most rigorous data we have shows that AI’s real workplace footprint is far smaller than its capability allows. The voices working hardest to make sense of that data — researchers, analysts, practitioners, business strategists, and the IT leaders running the rollouts — are increasingly pointing at the same answer: the gap closes when leaders treat AI as a structural question. How decisions flow. Where context lives. What is governed and what is open. Who is equipped to direct the technology, and who is catching up.

That convergence is also what we see in our own work. Across our ongoing tracking of vendor, analyst, and practitioner signals in enterprise IT, the pattern is consistent: wherever the gap is measured, structure is the variable that explains it.

That is not a warning. It is an invitation. The leaders who put their organisations through that structural work in the next few years will compound their advantage steadily — and quietly — while the rest of the market is still arguing about which model is best.

The technology is ready. The opportunity is sized. The work is leadership work, and it is already underway in the organisations that will define the next decade of enterprise IT.

That is where we would encourage leaders to look. And it is where we are putting our own work.

By Kristina Petrić and Michel Conter

At Conter.biz, we work with enterprise leaders on architecture, service-management, and operating-model practices that turn AI capability into real organisational value.

Sources

- [1] Anthropic: “Labor market impacts of AI: A new measure and early evidence,” by Maxim Massenkoff and Peter McCrory. March 5, 2026. https://www.anthropic.com/research/labor-market-impacts

- [2] Anthropic: Anthropic Economic Index (research hub). https://www.anthropic.com/economic-index

- [3] Harvard Business Review: “The ‘Last Mile’ Problem Slowing AI Transformation.” March 2026. https://hbr.org/2026/03/the-last-mile-problem-slowing-ai-transformation

- [4] McKinsey & Company: “The state of AI: How organizations are rewiring to capture value.” https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-how-organizations-are-rewiring-to-capture-value

- [5] Boston Consulting Group: “The Widening AI Value Gap.” September 2025. https://media-publications.bcg.com/The-Widening-AI-Value-Gap-Sept-2025.pdf

- [6] Gartner: “Gartner Predicts 40% of Enterprise Apps Will Feature Task-Specific AI Agents by 2026, Up from Less Than 5% in 2025.” August 26, 2025. https://www.gartner.com/en/newsroom/press-releases/2025-08-26-gartner-predicts-40-percent-of-enterprise-apps-will-feature-task-specific-ai-agents-by-2026-up-from-less-than-5-percent-in-2025

- [7] Gartner: “Gartner Predicts Over 40% of Agentic AI Projects Will Be Canceled by End of 2027.” June 25, 2025. https://www.gartner.com/en/newsroom/press-releases/2025-06-25-gartner-predicts-over-40-percent-of-agentic-ai-projects-will-be-canceled-by-end-of-2027

- [8] Stack Overflow: 2025 Developer Survey. https://survey.stackoverflow.co/2025/

- [9] ITSM.tools: “The State of AI in IT for 2026: Adoption, Trust, ROI, and Barriers,” by Stephen Mann. December 22, 2025. https://itsm.tools/state-of-ai-in-it-2026/

- [10] The Register: “Enterprise AI agent rollouts slow outside the lab,” by Lindsay Clark. January 28, 2026. https://www.theregister.com/2026/01/28/ai_agents_redis/